Hi T,

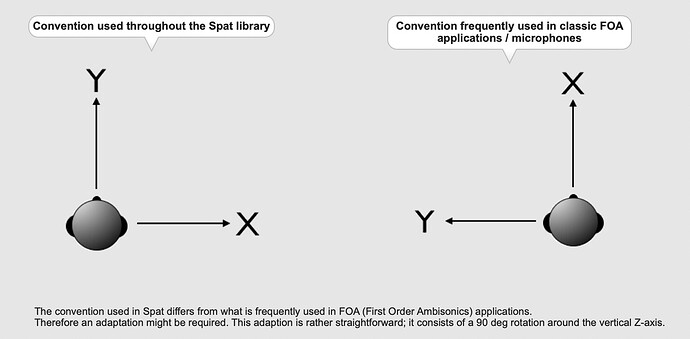

Yes, the 90° rotation does work, but I would need several instances of it because I’m working with a modular approach.

This involves mono/stereo encoders, with early reflections from a cuboidal “room” feeding a global reverberation engine. Each module has its own control panel; these panels are used as bpatchers so that a number of sources can be displayed simultaneously, with external control via MIDI or OSC. I am also trying to incorporate a convincing distance model for sounds both inside and outside the speaker array, which is nominally at unit distance.

Similarly, the decoding is controlled by bpatchers, one for each speaker, though there is only one decoder object.

There is also a graphic display of the “room”, sources and loudspeakers, built with Jitter, which uses yet another coordinate system.
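Because the Jitter display, the encoders and the ambisonic maths may each use different axes, a small pair of conversion functions helps keep them consistent. This is a minimal sketch under stated assumptions: the ambisonic convention is taken as x front, y left, z up with azimuth counter-clockwise from the front, and the Jitter/OpenGL scene as x right, y up, z towards the viewer with the listener facing -z. Both should be checked against the actual patches, and objects such as Spat~ or ICST may define azimuth differently, so their documentation is worth checking too. The function names are hypothetical.

```python
import math

# Assumed conventions (hypothetical, check against the actual patches):
#   ambisonic: x = front, y = left, z = up; 1.0 = speaker array radius
#   OpenGL/Jitter-style: x = right, y = up, z = towards viewer, listener faces -z

def gl_to_ambi(xg, yg, zg):
    """Convert an OpenGL-style point to ambisonic axes."""
    return (-zg, -xg, yg)

def ambi_to_gl(x, y, z):
    """Inverse of gl_to_ambi."""
    return (-y, z, -x)

def ambi_to_spherical(x, y, z):
    """Return (azimuth_deg, elevation_deg, distance); azimuth counter-clockwise from front."""
    r = math.sqrt(x * x + y * y + z * z)
    az = math.degrees(math.atan2(y, x))
    el = math.degrees(math.atan2(z, math.hypot(x, y)))
    return az, el, r
```

For example, a point one unit to the left in the Jitter scene, `gl_to_ambi(-1.0, 0.0, 0.0)`, maps to azimuth 90°, elevation 0°, distance 1.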

I’m using 16 inputs and outputs: enough for 3rd-order ambisonics, a reasonable demand on users’ hardware, and adequate for a proof of concept. As the system is modular, it should then be fairly simple to expand.
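The figure of 16 follows from the channel count of a full-sphere ambisonic signal of order N, (N+1)^2, which also shows how the system scales when expanded. The sketch below adds the ACN channel index (n^2 + n + m) used by the AmbiX convention; FuMa and some older tools order channels differently, which is one of the things that matters for spatial compatibility between tools. The function names are hypothetical.

```python
def num_channels(order):
    """Components in a full-sphere ambisonic signal of the given order: (N+1)^2."""
    return (order + 1) ** 2

def acn(n, m):
    """ACN channel index for order n and degree m, with -n <= m <= n (AmbiX ordering)."""
    if not -n <= m <= n:
        raise ValueError("m must lie between -n and n")
    return n * n + n + m
```

Orders 1, 2 and 3 give 4, 9 and 16 channels; expanding to 5th order, for instance, would need `num_channels(5)`, i.e. 36 channels, and typically a correspondingly larger speaker array.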

This is all based on a long-standing 1st-order ambisonic version, which works well. The distance model mentioned above worked well there, especially as the XYZ components all go to zero as the xyz coordinates go to zero. W was increased inside the unit sphere according to Gerzon’s equation. Outside the unit sphere the directional encoding was maintained, and the amplitude diminished as 1/r (the inverse square law expressed as amplitude), with the amount of roll-off scalable. The reverb send varies as 1/√r, as suggested by Chowning.
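The gain laws outside the unit sphere can be sketched as a pair of functions of distance, one for the direct signal and one for the reverb send. This is a minimal sketch assuming distances in array-radius units (1.0 = speaker array); `rolloff` is a hypothetical name for the scalable exponent, with 1.0 giving the 1/r law. Gerzon’s W boost inside the sphere is not reproduced here: inside the sphere both gains are simply held at 1, which is an assumption.

```python
import math

def distance_gains(r, rolloff=1.0, r_min=1.0):
    """Return (direct_gain, reverb_send_gain) for a source at distance r.

    r is in array-radius units. Beyond r_min the direct gain falls as
    1 / r**rolloff (rolloff = 1.0 is the 1/r amplitude law) and the reverb
    send as 1 / sqrt(r), after Chowning. Inside r_min both are held at 1
    (an assumption; the W boost inside the sphere is handled separately).
    """
    r_eff = max(r, r_min)
    return 1.0 / r_eff ** rolloff, 1.0 / math.sqrt(r_eff)
```

At r = 4 this gives a direct gain of 0.25 and a reverb send of 0.5. Because the send falls more slowly than the direct signal, the direct-to-reverberant ratio drops as 1/√r, which is the distance cue Chowning relied on.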

With 3rd order I started from the basic encoding equations, but this led to a rather large processing load given the number of inputs and outputs. That led me to explore the ICST and Spat~ objects. I had found the latter much more efficient when attempting an amplitude/delay panning project (Delta Stereophony, roughly equivalent to a simplified WFS). Another attraction is being able to use simpler, shorter /sources lists, without having to specify the source number and send as separate messages.
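For reference, the basic 3rd-order encoding equations amount to 16 real spherical harmonics evaluated at the source direction. This sketch assumes the AmbiX convention (ACN channel order, SN3D normalisation) and axes x front, y left, z up; Spat~, ICST or FuMa-based tools may use other orderings or normalisations. The function names are hypothetical.

```python
import math

def sh_sn3d_acn(azimuth_deg, elevation_deg):
    """Real spherical harmonics up to 3rd order, SN3D normalisation, ACN order (AmbiX)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    x = math.cos(az) * math.cos(el)  # unit vector: x front, y left, z up (assumed)
    y = math.sin(az) * math.cos(el)
    z = math.sin(el)
    s3, s15 = math.sqrt(3.0), math.sqrt(15.0)
    return [
        1.0,                                         # 0  W
        y, z, x,                                     # 1-3  Y Z X
        s3 * x * y,                                  # 4  V
        s3 * y * z,                                  # 5  T
        0.5 * (3 * z * z - 1),                       # 6  R
        s3 * x * z,                                  # 7  S
        0.5 * s3 * (x * x - y * y),                  # 8  U
        math.sqrt(5 / 8) * y * (3 * x * x - y * y),  # 9  Q
        s15 * x * y * z,                             # 10 O
        math.sqrt(3 / 8) * y * (5 * z * z - 1),      # 11 M
        0.5 * z * (5 * z * z - 3),                   # 12 K
        math.sqrt(3 / 8) * x * (5 * z * z - 1),      # 13 L
        0.5 * s15 * z * (x * x - y * y),             # 14 N
        math.sqrt(5 / 8) * x * (x * x - 3 * y * y),  # 15 P
    ]

def encode(sample, azimuth_deg, elevation_deg):
    """Encode one mono sample into 16 B-format channels."""
    return [sample * g for g in sh_sn3d_acn(azimuth_deg, elevation_deg)]
```

In SN3D the squared gains within each order sum to 1 for any direction, a handy sanity check. On processing load: the 16 gains only change when a source moves, so one option is to compute them at control rate and leave the audio path with 16 multiplies per source per sample.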

Neither of these really seems to deal adequately with distance, and their directional components do not go to zero at the centre, so a source placed there was not heard as central. My solution is to fade out the first- and higher-order components as the centre is approached, and to increase W to compensate for the loss in level.
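One way to implement this fade: scale every component of order 1 and above by a factor falling linearly from 1 at a chosen radius to 0 at the centre, and raise W so that the sum of squared channel gains stays constant. The fade radius `r_fade` and the energy-preserving rule are assumptions for illustration, not Gerzon’s equation or what Spat~ or ICST do internally; the function name is hypothetical.

```python
import math

def fade_near_centre(gains, r, r_fade=0.5):
    """Fade components of order >= 1 towards zero as r -> 0 and boost W.

    gains  : encoded channel gains in ACN order, gains[0] being W (assumed >= 0).
    r      : source distance in array-radius units.
    r_fade : radius below which fading starts (hypothetical default).
    W is raised so that the sum of squared channel gains is unchanged,
    an assumed compensation rule, not Gerzon's equation.
    """
    f = min(max(r / r_fade, 0.0), 1.0)  # 1 for r >= r_fade, 0 at the centre
    directional = [g * f for g in gains[1:]]
    lost = sum(g * g for g in gains[1:]) - sum(d * d for d in directional)
    w = math.sqrt(gains[0] ** 2 + max(lost, 0.0))
    return [w] + directional
```

At the centre, a first-order frontal source `[1.0, 0.0, 0.0, 1.0]` becomes pure W with gain √2, so it is heard as central, while beyond `r_fade` the gains are untouched.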

Additionally, when producing an early-reflection send signal from a cuboidal room, the signals along the xyz axes cancel, because contributions from opposite walls are equal and of opposite polarity. At the centre they either default to a direction along one axis or cancel completely. A solution seemed to lie in deriving A-format signals, as suggested in several papers (e.g. Wiggins), working with those, and encoding to 2nd (or possibly even 3rd) order at the end, before decoding.
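A first-order B-format signal can be sampled into four A-format signals by forming virtual cardioids pointing at the vertices of a regular tetrahedron, and recombined exactly afterwards. This sketch assumes SN3D-style first-order signals (W not scaled by 1/√2 as in FuMa) and the capsule order LFU, RFD, LBD, RBU; it is one textbook formulation, not necessarily the one in Wiggins’ papers, and the names are hypothetical. Because the conversion is linear, it does not by itself stop opposite reflections cancelling: the benefit would come from processing each A-format channel independently (different delays, filters or reverb inputs) before re-encoding.

```python
import math

# Tetrahedral capsule directions LFU, RFD, LBD, RBU (x front, y left, z up; assumed).
_S = 1.0 / math.sqrt(3.0)
TETRA = [(_S, _S, _S), (_S, -_S, -_S), (-_S, _S, -_S), (-_S, -_S, _S)]

def b_to_a(w, x, y, z):
    """First-order B-format -> four virtual cardioids, 0.5 * (W + u . [X, Y, Z])."""
    return [0.5 * (w + ux * x + uy * y + uz * z) for ux, uy, uz in TETRA]

def a_to_b(a):
    """Exact inverse of b_to_a for this regular tetrahedron.

    Uses sum(u) = 0 and sum(u u^T) = (4/3) I over the four directions.
    """
    w = 0.5 * sum(a)
    x = 1.5 * sum(ai * u[0] for ai, u in zip(a, TETRA))
    y = 1.5 * sum(ai * u[1] for ai, u in zip(a, TETRA))
    z = 1.5 * sum(ai * u[2] for ai, u in zip(a, TETRA))
    return w, x, y, z
```

An omnidirectional signal `b_to_a(1.0, 0.0, 0.0, 0.0)` puts 0.5 into every capsule, and `a_to_b(b_to_a(w, x, y, z))` returns the original four channels.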

A lot of ambisonic plug-ins only let one pan a sound around at a fixed distance, whereas most sound sources travel in straight lines, or along paths that can be approximated by a succession of straight lines.
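A straight-line path is easy to generate in Cartesian coordinates and convert to the azimuth, elevation and distance most encoders expect. A minimal sketch, assuming the x-front, y-left, z-up convention and array-radius units; the function name is hypothetical.

```python
import math

def straight_line_path(start, end, steps):
    """Yield (azimuth_deg, elevation_deg, distance) at steps + 1 points from start to end.

    start, end: (x, y, z) in array-radius units (x front, y left, z up; assumed).
    """
    for i in range(steps + 1):
        t = i / steps
        x, y, z = (s + t * (e - s) for s, e in zip(start, end))
        r = math.sqrt(x * x + y * y + z * z)
        az = math.degrees(math.atan2(y, x))  # atan2(0, 0) is 0: direction undefined at the centre
        el = math.degrees(math.atan2(z, math.hypot(x, y)))
        yield az, el, r
```

For a fly-by from (2, 3, 0) to (2, -3, 0), the distance dips to 2 at the closest approach, directly in front. At the origin the azimuth is arbitrary, which is the same centre problem described earlier.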

Of course, there is a problem in that ambisonics is essentially dimensionless. This arises when calculating delay compensation for loudspeaker arrays and when simulating the Doppler effect, because delay depends on the ‘real’ distance in metres or some other defined unit.
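Getting from dimensionless coordinates to delays needs an explicit scale, for example the physical array radius in metres. This sketch assumes c = 343 m/s and a 48 kHz sample rate (both assumptions), and the function names are hypothetical. Speaker compensation delays each nearer speaker by its path difference to the farthest one and attenuates it by r/r_max; for Doppler, feeding the propagation delay of a moving source into a variable delay line produces the pitch shift without computing it explicitly.

```python
SPEED_OF_SOUND = 343.0  # m/s, roughly air at 20 degC (assumed value)

def speaker_compensation(distances_m, sample_rate=48000):
    """(delay_samples, gain) per speaker, aligning every speaker to the farthest one.

    Nearer speakers are delayed by their path difference and attenuated by
    r / r_max so that their level at the centre matches a 1/r law.
    """
    r_max = max(distances_m)
    return [
        (round((r_max - r) / SPEED_OF_SOUND * sample_rate), r / r_max)
        for r in distances_m
    ]

def propagation_delay_ms(r_units, array_radius_m):
    """Delay for a source at r_units (1 = array radius) once a metre scale is chosen.

    Updating a variable delay line with this value as the source moves
    yields the Doppler shift without computing it explicitly.
    """
    return 1000.0 * r_units * array_radius_m / SPEED_OF_SOUND
```

With speakers at 2 m and 3 m, the nearer one is delayed by 140 samples and scaled by 2/3.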

Many years ago I was able to make plug-ins with Max 4. Some still work in Reaper, though their user interface has gone. I’m not a proper programmer and cannot author my own plug-ins. Most of my audio work is done in ProTools, though I do use Logic with some clients. Logic is crippled for use with ambisonics. I occasionally use Reaper. Cubase and (better) Nuendo are good alternatives with a decent architecture, but how many DAWs does one need, can one afford, and can one be good at using? Often, when forced to use Logic, I feel I could have finished the job in ProTools in the time it takes me to trawl through the Logic manual and search online for how to do what I need.

Hence my interest in ProTools plug-ins, as I would need to use those to ease production. Apart from the Blue Ripple ones, those available provide only binaural decoders, not loudspeaker decoders. Hence my desire to build a loudspeaker decoder that works simply. I can, of course, send audio from ProTools via Soundflower or Loopback to Max, encode there, and record the B-format or loudspeaker feed signals. However, if I need to record back into ProTools, or import this recording into it, I have to ensure that it is spatially compatible, i.e. that its channel ordering, normalisation and orientation match those of the plug-ins.
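As an illustration of how simple a basic loudspeaker decoder can be, here is a sampling (projection) decoder. This is one standard textbook design, not the Blue Ripple method. It assumes SN3D/ACN input and a regular layout (a spherical t-design, such as a cube for 1st order); for irregular layouts a mode-matching (pseudo-inverse) or AllRAD decoder is usually preferred. Each speaker feed is (1/L) Σ (2n+1) Y_k(u_l) B_k, where L is the number of speakers, u_l a speaker direction and n the order of channel k. The names are hypothetical.

```python
import math

def acn_order(acn):
    """Ambisonic order n of an ACN channel index (n = floor(sqrt(acn)))."""
    return math.isqrt(acn)

def sampling_decoder(sh_at_speakers):
    """Basic sampling decoder matrix for SN3D-encoded B-format.

    sh_at_speakers[l][k]: SN3D spherical harmonic k (ACN order) evaluated at
    speaker l's direction. The (2n + 1) weight converts SN3D to the N3D form
    in which the projection decoder is simply Y^T / L.
    """
    n_spk = len(sh_at_speakers)
    return [
        [(2 * acn_order(k) + 1) * y / n_spk for k, y in enumerate(row)]
        for row in sh_at_speakers
    ]

def decode(matrix, bformat):
    """Apply a decoder matrix to one frame of B-format channels."""
    return [sum(m * b for m, b in zip(row, bformat)) for row in matrix]
```

With a cube of eight speakers and a first-order source from the front (B = [1, 0, 0, 1], rows [1, y, z, x] per speaker), the feeds sum to 1 and the velocity vector points straight ahead. The rear speakers receive small negative (out-of-phase) signals, which is why max-rE or in-phase weightings are often applied on top.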

“Reverse engineering the mathematical formulae” seems the most efficient way of doing this.

Incidentally, I have been using the trial versions of the Noise Makers and Dear Reality plug-ins, and the free Blue Ripple O3A Core ones. The latter only allow rotation, but the other two will pan freely.

Ciao,

Dave